Child volumes that don't specify compression option will inherit from parent volume. Method used to compress the data on the volume. Copy Tags To Snapshots boolĪ boolean flag indicating whether tags for the file system should be copied to snapshots. Trust OpenZFS to make good of object storage.The volume id of volume that will be the parent volume for the volume being created, this could be the root volume created from the resource with the root_volume_id or the id property of another. As this implementation matures becomes an OpenZFS feature, it would be able to work with AWS’s S3 storage and other S3 compatible cloud storage. Think about having a highly transactional server housed on-premises using all kinds of storage services – SAN, NAS, S3 – everywhere, with OpenZFS as the sole aggregator and champion of its data. The possibilities, when this OpenZFS with object storage is in the next OpenZFS version release, is very exciting. This new addition of integration with object storage provides the best of both world where stateful applications do not leave the comfort settings, and still be able to take advantage the benefits of object storage. These applications work comfortably and confidently with POSIX-compliant filesystems and NAS and SAN storage services. Many stateful applications such as databases demand ACID of atomicity, consistency, isolation and durability. The momentum can only get bigger from here on, and the outcome, better than before! New possibilities It will not be a flip of a switch, but the ball has started rolling. There are still a lot of thoughts, considerations and improvements to bring OpenZFS on object storage to an optimal stage. The Zetta Cache video presentation is below: There are plenty more, and these are shared in the video from the OpenZFS Developer Summit 2021 below:

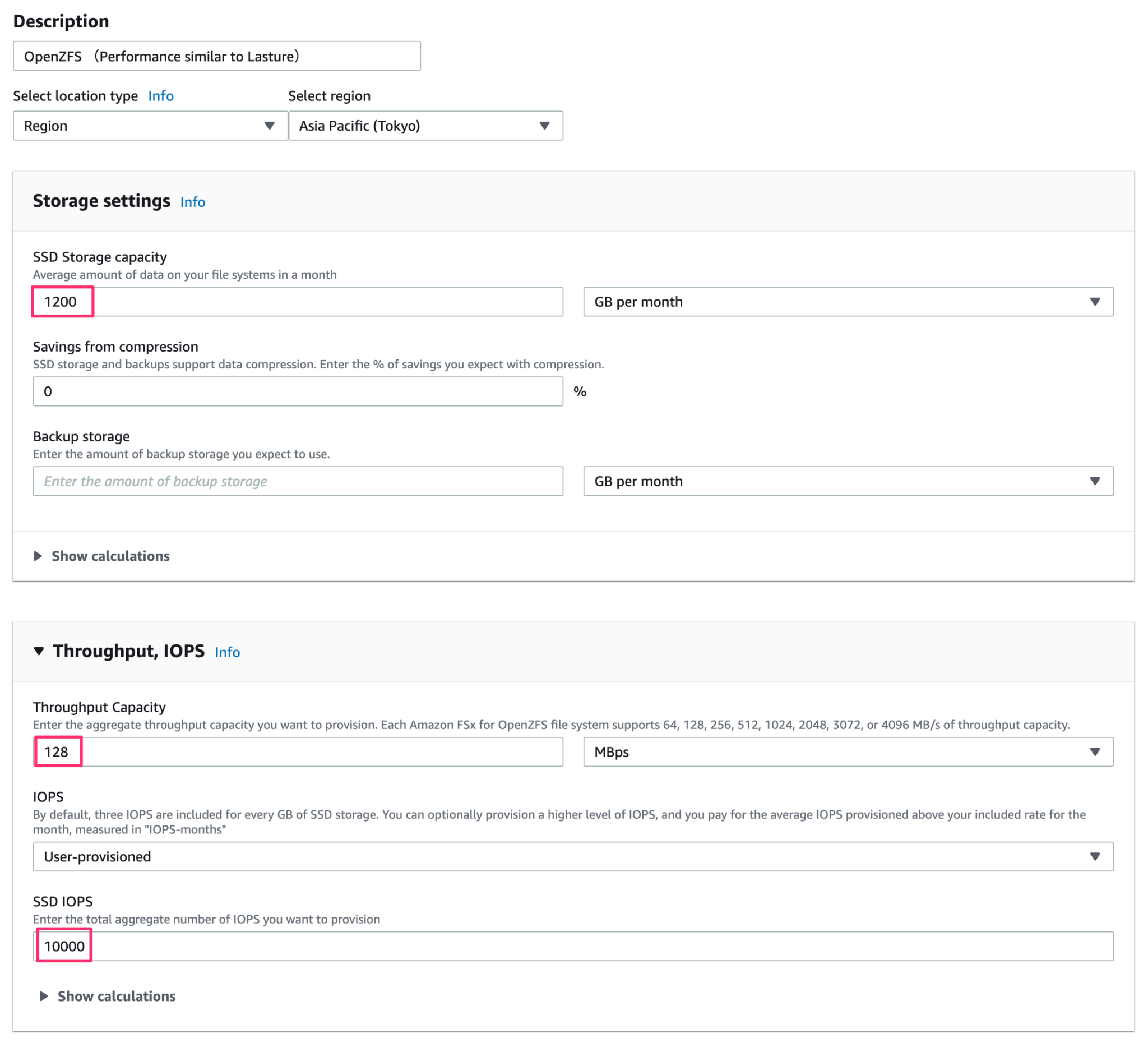

What I have shared so far are just the tip of the iceberg. I want to say that I am not doing enough justice to what I have written here. The cache is populated and unpopulated over an LRU (least recently used) algorithm to keep things efficiently. It stores the indexed mapping of the Block IDs-to-Object IDs (though not the entire map), metadata related to the blocks and objects allocated as well as frequently requested object data and written data blocks. It has several functions in order to improve the responsiveness to the Object Store. Lastly, Zetta Cache is a quasi, Unix-like filesystem implementation that should run on persistent storage media. Zetta Object also manages the S3 communication. This is to improve performance, especially when dealing with small block sized (< 16KB) transactional workloads. Several blocks may be coalesced into one object. Note that the mapping of blocks to objects are not 1-1 pairing. It handles the pairing of both worlds, and the mapping of Block IDs to Object IDs. The Zetta Object is critical because it is the “translator” or the bridge between ZFS filesystem and the object storage. Instead of the usual hard disk drives or SSDs or block devices, the Object Store vdev links the block I/O calls, reads and writes to the ZFS I/O scheduler (ZIO) to the object storage backend.īetween the Object Store and the object storage back end is the Zetta Object which converts the block I/O requests into object storage requests. There is a new vdev (virtual device) structure in OpenZFS called Object Store. These are the 3 new foundational ZFS components, named Object Store, Zetta Object and Zetta Cache. I have highlighted 3 green boxes in the architecture diagram above. OpenZFS architecture with object storage integration Newcomer components In the recently concluded OpenZFS Developer Summit 2021, one of the topics was “ ZFS on Object Storage“, and the short answer is a resounding YES! Can OpenZFS integrate with an S3 object storage backend? 
This blog looks into the burning question. It is about what is coming, and eventually AWS FSx for OpenZFS could expand into AWS’s proficient S3 storage as well. This is one hell of a filesystem.īut this blog isn’t about AWS FSx for OpenZFS with block storage. It is an absolutely joy (for me) to see the open source OpenZFS filesystem getting the validation and recognization from AWS. I am assuming the AWS OpenZFS uses EBS as the block storage backend, given the announcement that it can deliver 4GB/sec of throughput and 160,000 IOPS from the “drives” without caching. How the OpenZFS is provisioned to the AWS clients is well documented in this blog here.

The highly scalable OpenZFS filesystem can provide high throughput and IOPS bandwidth to Amazon EC2, ECS, EKS and VMware® Cloud on AWS. This is the 4th managed service under the Amazon FSx umbrella, joining NetApp® ONTAP™, Lustre and Windows File Server.

At AWS re:Invent last week, Amazon Web Services announced Amazon FSx for OpenZFS.
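Zetta Object's block-to-object mapping is not 1-to-1, and several small blocks can be coalesced into one object. The minimal Python sketch below packs small blocks into larger objects and records where each Block ID landed. Everything in it is an assumption for illustration. The class and method names, the flush threshold, and the dict standing in for an S3 bucket are all hypothetical. This is not the actual Zetta Object implementation or its object format.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class ObjectLocation:
    """Where a block lives: which object, and the byte range inside it."""
    object_id: int
    offset: int
    length: int


class BlockObjectMap:
    """Hypothetical sketch: coalesce small blocks into larger objects.

    Not the real Zetta Object code; names, the flush threshold and the
    in-memory 'bucket' are illustrative assumptions.
    """

    def __init__(self, target_object_size: int = 1024 * 1024) -> None:
        self.target_object_size = target_object_size  # assumed flush threshold
        self.next_object_id = 0
        self.pending: List[Tuple[int, bytes]] = []  # blocks not yet in an object
        self.pending_bytes = 0
        self.index: Dict[int, ObjectLocation] = {}  # Block ID -> location
        self.bucket: Dict[int, bytes] = {}  # stand-in for an S3 bucket

    def write_block(self, block_id: int, data: bytes) -> None:
        """Buffer a block; flush once enough bytes are pending."""
        self.pending.append((block_id, data))
        self.pending_bytes += len(data)
        if self.pending_bytes >= self.target_object_size:
            self.flush()

    def flush(self) -> None:
        """Pack all pending blocks into one new object (one PUT, many blocks)."""
        if not self.pending:
            return
        object_id = self.next_object_id
        self.next_object_id += 1
        payload = bytearray()
        for block_id, data in self.pending:
            # Record the offset before appending this block's bytes.
            self.index[block_id] = ObjectLocation(object_id, len(payload), len(data))
            payload += data
        self.bucket[object_id] = bytes(payload)
        self.pending.clear()
        self.pending_bytes = 0

    def read_block(self, block_id: int) -> bytes:
        """Return a block, checking unflushed writes before the index."""
        for pending_id, data in reversed(self.pending):
            if pending_id == block_id:
                return data
        loc = self.index[block_id]
        obj = self.bucket[loc.object_id]
        return obj[loc.offset:loc.offset + loc.length]


if __name__ == "__main__":
    m = BlockObjectMap(target_object_size=16 * 1024)
    for i in range(8):
        m.write_block(i, bytes([i]) * 4096)  # eight 4 KiB blocks
    print(len(m.bucket))  # 2
    print(m.index[5])     # ObjectLocation(object_id=1, offset=4096, length=4096)
```

In this sketch, eight 4 KiB blocks cost two object writes instead of eight. Savings like this matter most for small-block transactional workloads. The trade-off is that every read first needs an index lookup (Block ID to Object ID plus offset), which is one reason to keep part of that mapping in a fast cache.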
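Zetta Cache populates and evicts entries using an LRU policy, which the small byte-bounded cache below illustrates. This is a hypothetical in-memory sketch. The real Zetta Cache runs on persistent media and also holds index and metadata entries. Here, `LruBlockCache` and its parameters are made-up names that only show how the least recently used entries get evicted.

```python
from collections import OrderedDict
from typing import Optional


class LruBlockCache:
    """Hypothetical in-memory sketch of LRU population and eviction.

    The real Zetta Cache lives on persistent media and also caches index and
    metadata entries; this only illustrates the least-recently-used policy.
    """

    def __init__(self, capacity_bytes: int) -> None:
        self.capacity_bytes = capacity_bytes
        self.used_bytes = 0
        self.entries: OrderedDict = OrderedDict()  # key -> data, oldest first

    def get(self, key: int) -> Optional[bytes]:
        data = self.entries.get(key)
        if data is not None:
            self.entries.move_to_end(key)  # mark as most recently used
        return data

    def put(self, key: int, data: bytes) -> None:
        if len(data) > self.capacity_bytes:
            return  # larger than the whole cache: don't cache it
        if key in self.entries:
            self.used_bytes -= len(self.entries.pop(key))
        self.entries[key] = data
        self.used_bytes += len(data)
        # Evict least recently used entries until we fit again.
        while self.used_bytes > self.capacity_bytes:
            _, evicted = self.entries.popitem(last=False)
            self.used_bytes -= len(evicted)


if __name__ == "__main__":
    cache = LruBlockCache(capacity_bytes=8192)
    cache.put(1, b"a" * 4096)
    cache.put(2, b"b" * 4096)
    cache.get(1)               # touch block 1, so block 2 is now the LRU entry
    cache.put(3, b"c" * 4096)  # over capacity: evicts block 2
    print(list(cache.entries))  # [1, 3]
```

`OrderedDict.move_to_end` keeps entries ordered by recency of use. As a result, `popitem(last=False)` always removes the entry that has gone longest without being accessed.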
